YOLO26 Out-of-the-Box Support

We are excited to announce that with the release of SDK 2.2, MemryX adds out-of-the-box support for the newest addition to the Ultralytics family: YOLO26.

What's YOLO?

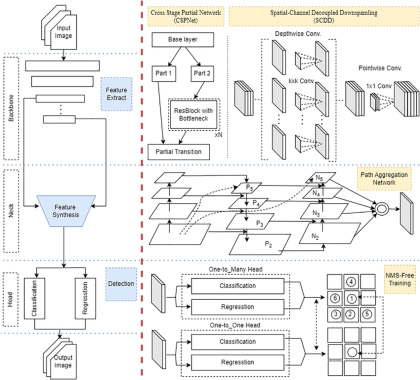

YOLO, short for You Only Look Once, refers to a family of single-shot computer vision models that began with the 2015 paper You Only Look Once: Unified, Real-Time Object Detection. The original focus was object detection, but the broader YOLO ecosystem has since expanded well beyond that into tasks like segmentation, pose estimation, oriented bounding boxes, and classification. Today, “YOLO” describes not just one model, but a fast-moving family of architectures built around the same real-time vision philosophy.

A major reason YOLO became so widely adopted is usability. In particular, Ultralytics helped make modern YOLO models accessible to a much broader developer audience through a simple framework for training, exporting, and running pretrained models. That ease of use is a big part of why YOLO models are now common across robotics, edge AI, video analytics, and embedded vision workloads.

Current Popular YOLO Versions

The computer vision landscape moves fast, and several YOLO model families coexist and are widely used today:

- YOLOv8: a family of models introduced by Ultralytics and derived from YOLOv7. They include multiple model variants and support multiple tasks such as segmentation and pose estimation, in addition to standard object detection.

- YOLOv9: introduced Programmable Gradient Information (PGI) and the GELAN architecture [paper].

- YOLOv10: introduced NMS-free training and the PSA (Partial Self-Attention) block [paper].

- YOLO11: a family of models introduced by Ultralytics and derived from YOLOv10. They include multiple model variants and support multiple tasks such as segmentation and pose estimation, in addition to standard object detection.

- YOLO26: the latest Ultralytics release, positioned for edge and low-power deployment, with support for detection, segmentation, pose, oriented detection, and classification.

- YOLO-NAS: a new YOLO architecture derived using NAS (neural architecture search) [paper].

- YOLO-E: a zero-shot, promptable YOLO model designed for open-vocabulary detection and segmentation [paper].

- YOLOP: a YOLO-based panoptic driving perception network that performs traffic object detection, drivable area segmentation, and lane detection simultaneously [paper].

YOLO26 - the latest iteration

Ultralytics describes YOLO26 as the latest evolution in its YOLO series, engineered for edge and low-power devices. Building on the end-to-end, NMS-free direction established in YOLOv10, YOLO26 introduces targeted improvements aimed at deployment simplicity, training efficiency, and task-specific accuracy.
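For readers unfamiliar with the term, "NMS-free" means the model's outputs can be used directly, without a non-maximum suppression pass to remove duplicate boxes. The following is a minimal sketch of that classic post-processing step in plain Python, shown only to illustrate what an end-to-end model makes unnecessary. It is not the Ultralytics or MemryX implementation, and the function names and the 0.5 threshold are illustrative assumptions.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: keep the highest-scoring box, drop boxes that overlap it."""
    # Visit candidates from most to least confident.
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap any already-kept box too much.
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep


if __name__ == "__main__":
    # Two near-duplicate detections of one object, plus one separate object.
    boxes = [(10, 10, 50, 50), (12, 12, 52, 52), (100, 100, 140, 140)]
    scores = [0.9, 0.8, 0.7]
    print(nms(boxes, scores))  # indices of the boxes that survive
```

Because this loop is data-dependent and usually runs on the host CPU after inference, removing it shortens the deployment pipeline, which is part of the "deployment simplicity" argument above.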

The supported task families include detection, instance segmentation, pose/keypoints, oriented bounding boxes, and classification.

This matters for MemryX users because YOLO26 is exactly the kind of model developers want to bring up quickly on accelerator hardware: modern, widely recognized, exportable through standard formats, and available across multiple task variants. The easier it is to take a mainstream model family from upstream source to deployed inference, the better the overall developer experience.

Out-of-the-box Model Support

MemryX accelerators were built from day one around a simple goal: bring your trained model and compile it without a special vendor-specific workflow. The MemryX Neural Compiler generates a DFP that runs on MXA hardware, and the public compiler documentation supports models from frameworks including ONNX, TFLite, Keras, and TensorFlow.

That means the default MemryX story is not “first rewrite your model for us,” but rather “start with the model you already have and compile it.” This philosophy is important. Yes, developers can still prune, quantize, distill, or otherwise optimize a model if they want to trade extra effort or some accuracy for more performance. But with MemryX, that is an option, not a requirement.

The Model eXplorer messaging makes the same point explicitly: publicly listed models are compiled and tested on MemryX hardware as they are, and users can choose to modify them later if they want to explore additional tradeoffs. In other words, the default workflow stays simple: export the model, compile it, benchmark it, and deploy it.

Just use the ONNX model as-is: no modifications, no quantizing.
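The export, compile, benchmark sequence can be sketched as a small Python helper that assembles the three command lines. Everything specific here is an assumption for illustration rather than something stated in this post: the weight file name `yolo26n.pt`, the Ultralytics `yolo export` invocation, the `mx_nc -m` and `mx_bench -d ... -f` flags, and the output file names. Check the Ultralytics and MemryX documentation for the exact options in your SDK version. The helper prints the commands by default and only runs them when asked.

```python
import shlex
import shutil
import subprocess
from pathlib import Path


def build_workflow(weights="yolo26n.pt", frames=1000):
    """Return export, compile, and benchmark commands as argument lists."""
    stem = Path(weights).stem
    onnx_path = f"{stem}.onnx"  # assumed name of the exported ONNX file
    dfp_path = f"{stem}.dfp"    # assumed name of the compiled DFP file
    return [
        # 1. Export the pretrained weights to ONNX (assumed Ultralytics CLI usage).
        ["yolo", "export", f"model={weights}", "format=onnx"],
        # 2. Compile the unmodified ONNX model to a DFP (assumed flag).
        ["mx_nc", "-m", onnx_path],
        # 3. Benchmark the DFP on the accelerator (assumed flags).
        ["mx_bench", "-d", dfp_path, "-f", str(frames)],
    ]


def run_workflow(commands, dry_run=True):
    """Print each command; execute it only when dry_run is False."""
    for cmd in commands:
        print(shlex.join(cmd))
        if dry_run:
            continue
        if shutil.which(cmd[0]) is None:
            raise FileNotFoundError(f"{cmd[0]} is not on PATH")
        subprocess.run(cmd, check=True)


if __name__ == "__main__":
    # Dry run: show the commands without executing anything.
    run_workflow(build_workflow())
```

Note that no pruning, quantization, or graph surgery step appears between export and compile, which is the point of the as-is workflow.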

What changed in SDK 2.2?

The SDK 2.2 release is important not just because it adds another YOLO family, but because it improves the compiler support underneath it.

That compiler work matters especially for newer YOLO-style architectures that lean on blocks which were technically supported before (like the partial self-attention blocks), but not always mapped efficiently. In earlier SDK versions, some attention-heavy portions of the modern YOLO family could become a performance bottleneck on MX3. With SDK 2.2, MemryX has substantially improved how those blocks map to the hardware, which is why several models now reach performance levels that previously required special MXA-optimized variants.

This is also why some MXA-Optimized YOLO11 entries introduced in SDK 2.1 are no longer needed: in a number of cases, the original models now achieve similar or better performance without any model modifications. A few exceptions still benefit from separate MXA-optimized versions, so those remain available.

YOLO26 support in practice

The SDK 2.2 results show that MemryX support for YOLO26 is not limited to one demo model. The current set covers 41 YOLO26 variants across multiple task families and resolutions: object detection, oriented bounding boxes, segmentation, pose estimation, and classification.

New example app: Object Detection Using YOLO26

To make getting started easier, SDK 2.2 adds a new example application that demonstrates real-time object detection with YOLO26 on MemryX accelerators. It offers a quick path to trying YOLO26 on MX3, with additional guidance available in the MemryX Developer Hub tutorial.